CS 6620 Fall 2014 - Project 11

Comparison of gamma-corrected and uncorrected images: 00:41:54

I ended up playing with gamma correction and had it implemented before it got bumped to the next project, so I put together a comparison. The gamma correction is done by converting the linear RGB values the render is computed with to sRGB values, since the sRGB color space includes a gamma correction term and is a common, well-supported color space. The left (brighter) image is the corrected one; the right is uncorrected. Both images were rendered with path tracing and adaptive sampling, with a minimum of 128 and a maximum of 1024 samples per pixel.
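For reference, here is a minimal C++ sketch of the linear-to-sRGB step. The conversion code isn't shown in this write-up, so this uses the piecewise transfer function from the sRGB specification (IEC 61966-2-1). The function names `linear_to_srgb` and `to_byte` are hypothetical. It assumes each channel is already in [0, 1] with no tone mapping, so brighter values are simply clipped.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

// Encode one linear-light channel value with the sRGB transfer function
// (IEC 61966-2-1). Values outside [0, 1] are clipped, not tone mapped.
double linear_to_srgb(double c) {
    c = std::min(std::max(c, 0.0), 1.0);
    if (c <= 0.0031308) {
        return 12.92 * c;  // linear segment near black
    }
    return 1.055 * std::pow(c, 1.0 / 2.4) - 0.055;  // power-law segment
}

// Quantize an encoded [0, 1] value to an 8-bit channel for the output image.
std::uint8_t to_byte(double encoded) {
    return static_cast<std::uint8_t>(std::lround(encoded * 255.0));
}
```

With this, a linear value of 0.5 is written as 188 instead of the 128 you get by writing it out directly. That is why the corrected image looks brighter.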

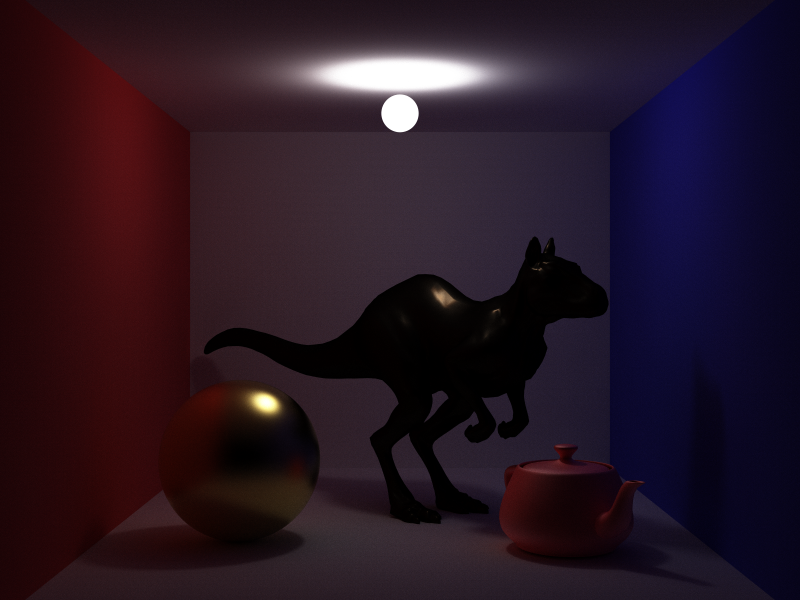

The scene uses the ordered polymesh version of Killeroo and the high-res Utah teapot. The Killeroo model uses a measured brass material from the MERL BRDF Database, and the sphere uses a measured gold material from PBRT's material spd files.

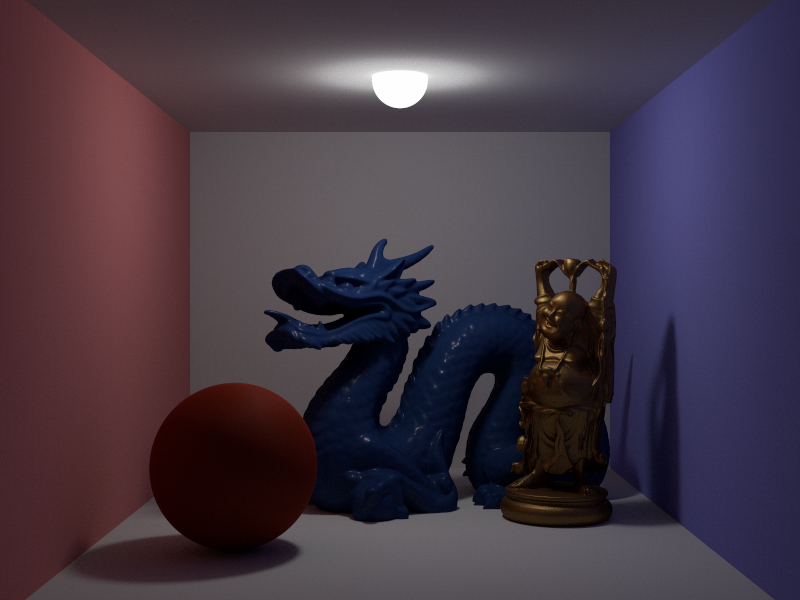

SmallPT Cornell Box w/ MERL BRDFs: 00:52:35

Adaptive sampling: min 128, max 1024

The MERL scene uses the Stanford Dragon and Happy Buddha from the Stanford 3D Scanning Repository. The material BRDFs come from the MERL BRDF Database, introduced in the paper "A Data-Driven Reflectance Model" by Wojciech Matusik, Hanspeter Pfister, Matt Brand and Leonard McMillan. The dragon uses the blue acrylic BRDF, the Buddha uses the gold metallic paint1 BRDF and the sphere uses the red fabric BRDF.
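As I understand the reference reader distributed with the MERL database, each `.binary` file starts with three 32-bit integers giving the table dimensions. These are followed by the red, green and blue tables, stored as doubles and indexed by Rusinkiewicz's half/difference angles. The sketch below only covers turning those angles into a table offset. It does not convert incident/outgoing directions into (theta_h, theta_d, phi_d), and the helper names are hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

constexpr int kThetaHRes = 90;   // theta_h bins
constexpr int kThetaDRes = 90;   // theta_d bins
constexpr int kPhiDRes = 180;    // phi_d bins over [0, pi), using reciprocity
constexpr double kPi = 3.14159265358979323846;
constexpr std::size_t kChannelStride =
    static_cast<std::size_t>(kThetaHRes) * kThetaDRes * kPhiDRes;
// Per-channel scale factors applied to the stored values (red, green, blue).
constexpr double kScale[3] = {1.0 / 1500.0, 1.15 / 1500.0, 1.66 / 1500.0};

// theta_h is sampled non-uniformly: the bin grows with sqrt(theta_h / (pi/2)),
// which puts more bins near the specular peak.
int theta_half_index(double theta_h) {
    if (theta_h <= 0.0) return 0;
    int i = static_cast<int>(std::sqrt(theta_h / (kPi / 2.0)) * kThetaHRes);
    return std::min(std::max(i, 0), kThetaHRes - 1);
}

// theta_d is sampled uniformly over [0, pi/2].
int theta_diff_index(double theta_d) {
    int i = static_cast<int>(theta_d / (kPi / 2.0) * kThetaDRes);
    return std::min(std::max(i, 0), kThetaDRes - 1);
}

// phi_d in [-pi, pi] is folded into [0, pi] (the BRDF is symmetric under
// phi_d -> phi_d + pi), then sampled uniformly.
int phi_diff_index(double phi_d) {
    if (phi_d < 0.0) phi_d += kPi;
    int i = static_cast<int>(phi_d / kPi * kPhiDRes);
    return std::min(std::max(i, 0), kPhiDRes - 1);
}

// Offset of a sample within one channel's table.
std::size_t merl_index(double theta_h, double theta_d, double phi_d) {
    return static_cast<std::size_t>(phi_diff_index(phi_d)) +
           static_cast<std::size_t>(theta_diff_index(theta_d)) * kPhiDRes +
           static_cast<std::size_t>(theta_half_index(theta_h)) * kPhiDRes * kThetaDRes;
}

// Scaled value of channel c (0 = red, 1 = green, 2 = blue) from the raw doubles.
double merl_lookup(const std::vector<double>& table, std::size_t idx, int c) {
    return table[idx + static_cast<std::size_t>(c) * kChannelStride] * kScale[c];
}
```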

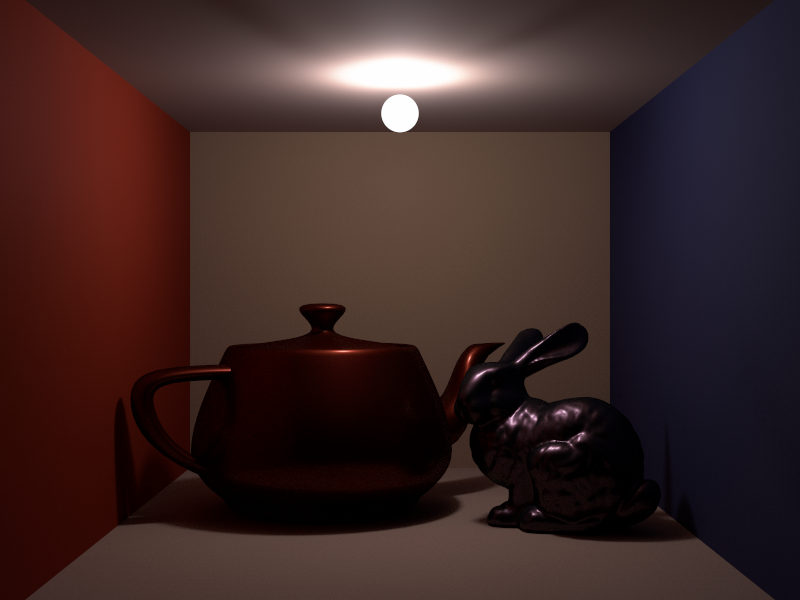

Fabric SmallPT Cornell Box: 00:45:52

Adaptive sampling: min 128, max 1024

The fabric box scene uses the Stanford Bunny from the Stanford 3D Scanning Repository and the high-res Utah teapot. The material BRDFs are from the MERL BRDF Database. The walls use the red, white and blue fabric BRDFs, the teapot uses red metallic paint and the bunny uses color changing paint2.
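Every render here lists adaptive sampling with a minimum of 128 and a maximum of 1024 samples per pixel, but the stopping criterion between those bounds isn't stated. The sketch below assumes one common choice: keep sampling until the standard error of the pixel's mean luminance falls below a relative tolerance. It tracks the running variance with Welford's method. `sample_pixel_adaptive`, `AdaptiveResult` and `rel_tol` are hypothetical names, not the project's code.

```cpp
#include <cmath>
#include <functional>

struct AdaptiveResult {
    double mean;  // estimated pixel luminance
    int samples;  // number of samples actually taken
};

// Hypothetical adaptive sampler: always takes min_spp samples, then stops once
// the standard error of the mean is <= rel_tol * |mean|, or at max_spp samples.
// `sample` returns the luminance of one path-traced sample for this pixel.
AdaptiveResult sample_pixel_adaptive(const std::function<double()>& sample,
                                     int min_spp, int max_spp, double rel_tol) {
    double mean = 0.0, m2 = 0.0;  // Welford running mean and sum of squared deviations
    int n = 0;
    while (n < max_spp) {
        const double x = sample();
        ++n;
        const double delta = x - mean;
        mean += delta / n;
        m2 += delta * (x - mean);
        if (n >= min_spp && n > 1) {
            const double variance = m2 / (n - 1);  // unbiased sample variance
            const double std_error = std::sqrt(variance / n);
            if (std_error <= rel_tol * std::fabs(mean)) break;
        }
    }
    return {mean, n};
}
```

On a constant pixel this stops at `min_spp`, while a very noisy pixel runs to `max_spp`. A per-channel test or an absolute threshold would fit the same min/max structure equally well.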

Hardware Used and Other Details

Render times were measured using std::chrono::high_resolution_clock and only include time spent rendering, i.e., the time to load the scene and write the images to disk is ignored. Images were rendered with path tracing using 32 threads, with the work divided into 8x8 blocks. A different machine was used to render the images, since my computer at home was out of commission when I was rendering the scenes.
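A minimal sketch of the setup described above: 8x8 blocks shared among 32 threads, with only the rendering inside the timed region. The write-up doesn't say how blocks were assigned to threads, so this assumes a shared atomic counter that each thread uses to grab the next block. `render_tiled` and `shade_pixel` are hypothetical names, and `shade_pixel` stands in for the path tracer's per-pixel work.

```cpp
#include <algorithm>
#include <atomic>
#include <chrono>
#include <functional>
#include <thread>
#include <vector>

// Renders a width x height image in tile x tile blocks on num_threads threads
// and returns the wall-clock render time in seconds. tile must be > 0.
// shade_pixel may be called concurrently, but each pixel exactly once.
double render_tiled(int width, int height, int tile, unsigned num_threads,
                    const std::function<void(int, int)>& shade_pixel) {
    const int tiles_x = (width + tile - 1) / tile;  // round up to cover edge tiles
    const int tiles_y = (height + tile - 1) / tile;
    const int num_tiles = tiles_x * tiles_y;
    std::atomic<int> next_tile(0);  // index of the next unclaimed block

    auto worker = [&]() {
        for (int t = next_tile++; t < num_tiles; t = next_tile++) {
            const int x0 = (t % tiles_x) * tile;
            const int y0 = (t / tiles_x) * tile;
            const int x1 = std::min(x0 + tile, width);
            const int y1 = std::min(y0 + tile, height);
            for (int y = y0; y < y1; ++y)
                for (int x = x0; x < x1; ++x)
                    shade_pixel(x, y);
        }
    };

    // Scene loading and image output happen outside this function,
    // so they are excluded from the measurement.
    const auto start = std::chrono::high_resolution_clock::now();
    std::vector<std::thread> threads;
    for (unsigned i = 0; i < num_threads; ++i) threads.emplace_back(worker);
    for (auto& th : threads) th.join();
    const auto end = std::chrono::high_resolution_clock::now();
    return std::chrono::duration<double>(end - start).count();
}
```

A call matching the text would be `render_tiled(w, h, 8, 32, shade)`. Because each pixel is visited by exactly one thread, `shade_pixel` can write into a shared per-pixel buffer without locking. `std::chrono::steady_clock` is generally the safer choice for measuring intervals, since `high_resolution_clock` is not guaranteed to be steady. It is used here only to match the text.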

RAM: Forgot to check

Compiler: gcc 4.9.1 x86_64 (on OpenSUSE)

Compilation Flags: -m64 -O3 -march=native -flto